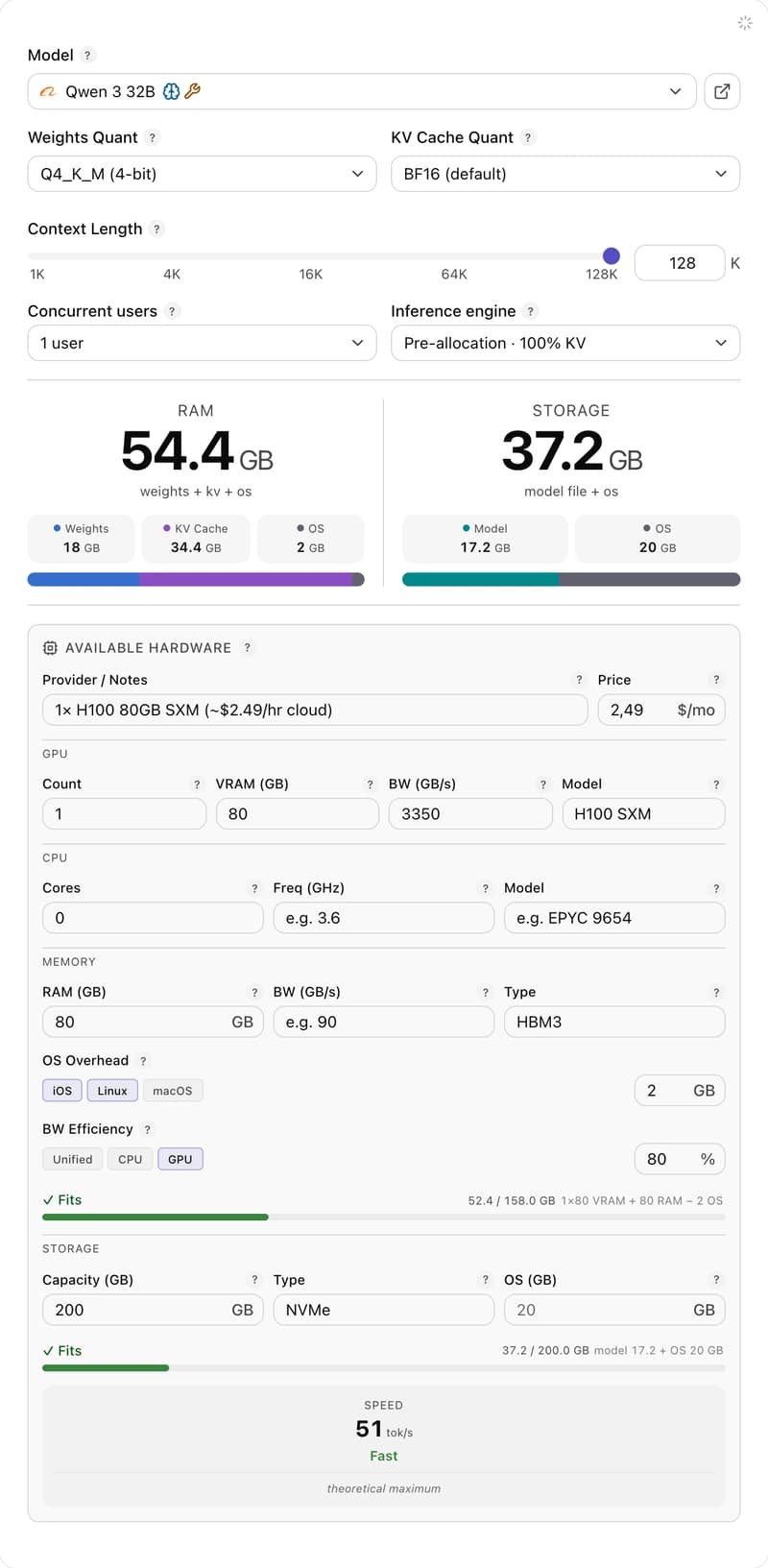

Hey LLM folks 👋 Whether you're shipping with Ollama on a Mac, vLLM on 8× H100, or anything in between, model planning shouldn't be napkin math. I built a free calculator, WeightRoom → https://smelukov.github.io/WeightRoom/ No backend, no signup, no telemetry. Pick a model, a quant, and a context window, and get RAM, disk, and TPS estimates in real time. State serializes to the URL, so configs are
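For a sense of the napkin math a calculator like this replaces, here is a minimal sketch of the usual back-of-the-envelope estimates. These are common rules of thumb, not WeightRoom's actual formulas (the excerpt does not show them). All function names and the example numbers are hypothetical, and the sketch ignores activations, runtime overhead, and quantized KV caches.

```python
def weights_bytes(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes (RAM and disk)."""
    return params_billions * 1e9 * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Approximate KV cache size for one sequence.

    The leading 2 accounts for one key and one value tensor per layer;
    bytes_per_value=2 assumes an fp16/bf16 cache.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


def decode_tps_upper_bound(memory_bandwidth_gb_s: float, weights_gb: float) -> float:
    """Rough ceiling on decode tokens/s, assuming generation is memory-bound
    and every token requires reading all weights once."""
    return memory_bandwidth_gb_s / weights_gb


# Hypothetical example: an 8B-parameter model at 4 bits per weight,
# 32 layers, 8 KV heads, head_dim 128, 8192-token context, 400 GB/s memory.
w = weights_bytes(8, 4)
kv = kv_cache_bytes(32, 8, 128, 8192)
tps = decode_tps_upper_bound(400, w / 1e9)
print(f"weights: {w / 1e9:.1f} GB, KV cache: {kv / 2**30:.1f} GiB, TPS ceiling: {tps:.0f}")
```

Real numbers land below these ceilings once overhead and batching come into play, which is the gap a dedicated calculator aims to close.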

WeightRoom — an LLM resource calculator

Sergey Melyukov · Dev.to · 1 min read


This article was sourced from Dev.to's RSS feed. Visit the original for the complete story.