Running a local LLM has typically meant either owning some pretty beefy hardware or buying dedicated hardware for the job. A decent GPU has been the minimum requirement, or failing that, a mini PC like a Mac Studio, a Mac Mini, or one of those 128GB DGX-adjacent workstations. What it didn't mean, until pretty recently, was pulling the phone out of your pocket and asking it to do the thinking for your entire smart home.

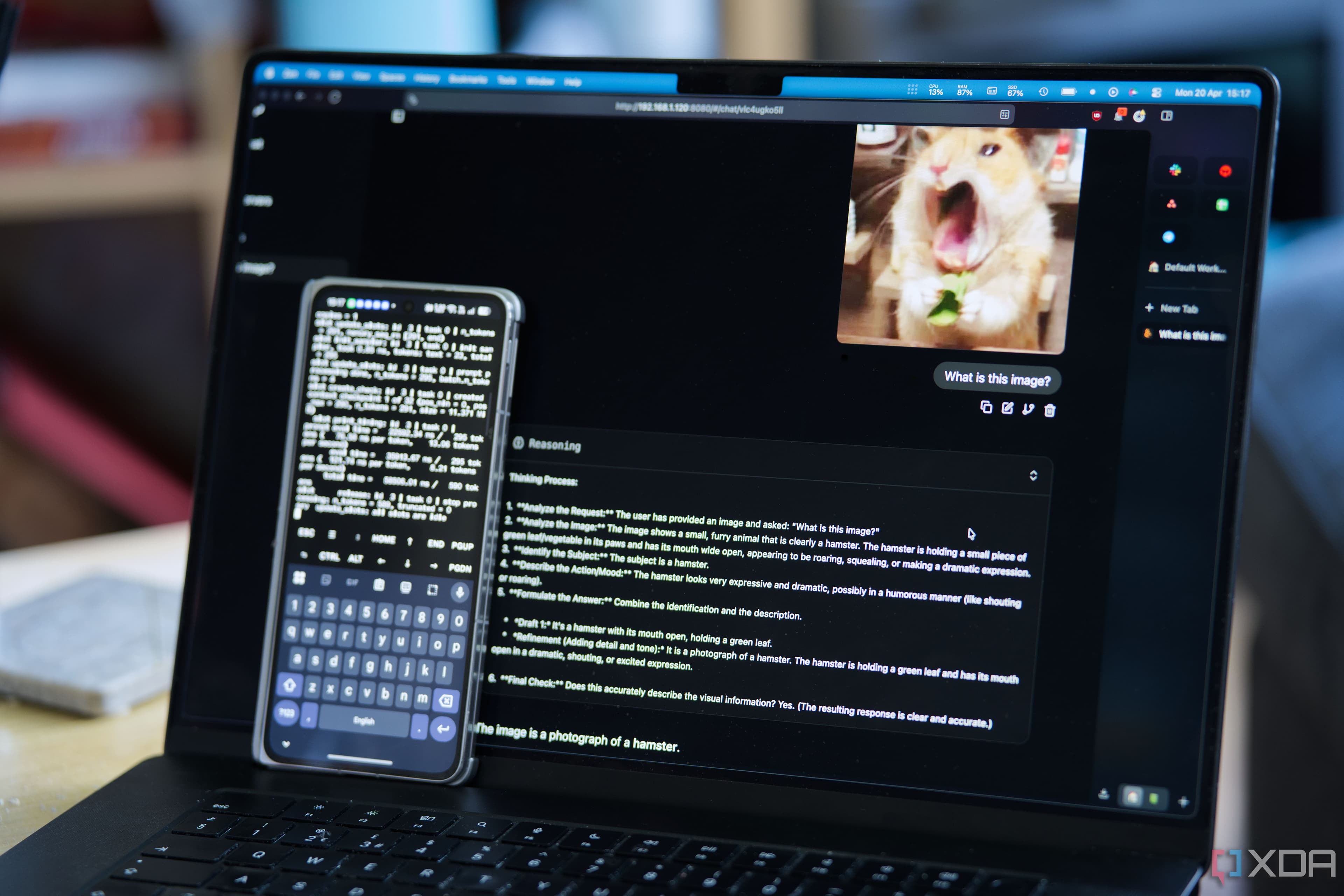

I turned my phone into a local LLM server, and it handles vision, voice, and tool calls

Adam Conway · XDA Developers · 1 min read



This article was sourced from XDA Developers's RSS feed. Visit the original for the complete story.