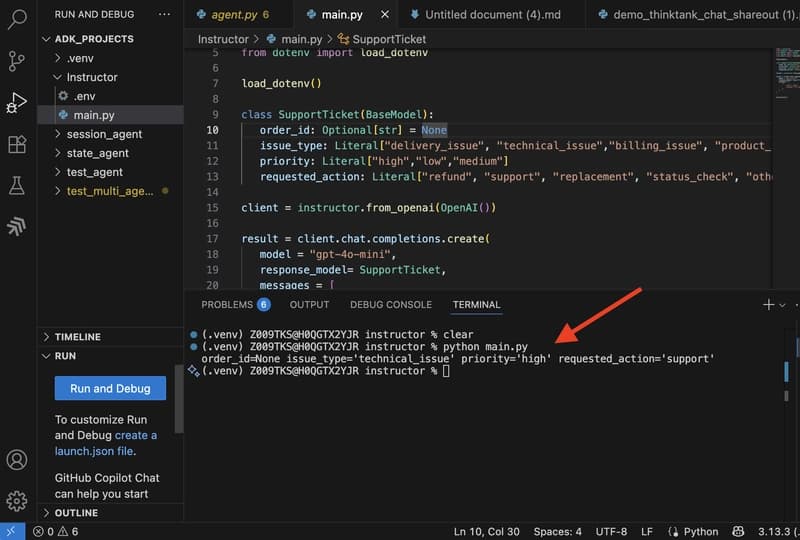

LLMs generate text one token at a time, typically by sampling each next token from a probability distribution. Pass the same prompt twice and you might get two different outputs. Sometimes it's a clean JSON object. Sometimes it's the same data wrapped in markdown fences and a paragraph of explanation you didn't ask for.

Often, you don't need the full response. You just need specific parts of it to feed into an API, store in a database, or pass to the ne