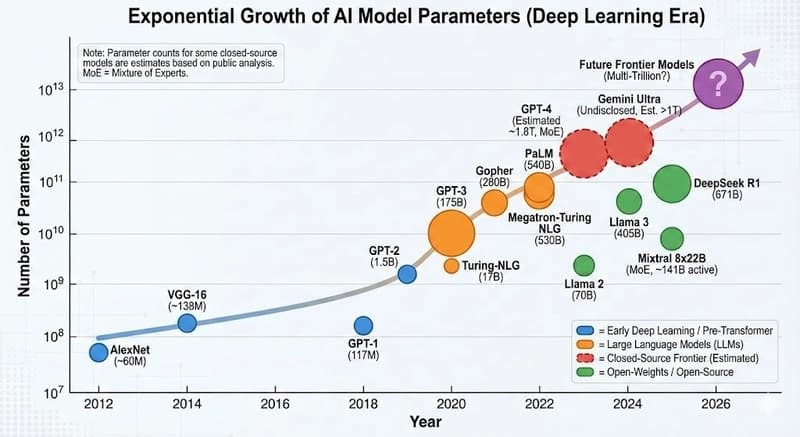

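Why LoRA? Low-Rank Adaptation (LoRA) has transformed the way we fine-tune Large Language Models (LLMs). LoRA is one of the most widely used Parameter-Efficient Fine-Tuning (PEFT) methods. It freezes the pretrained weights and trains only a small set of added low-rank matrices. Because only those small matrices need gradients and optimizer state, developers can adapt large open-weight models such as Llama 3 to specific tasks without a cluster of H100 GPUs.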

But how exactly does it work, and why is it so effective? In this post, we’ll dive into the math