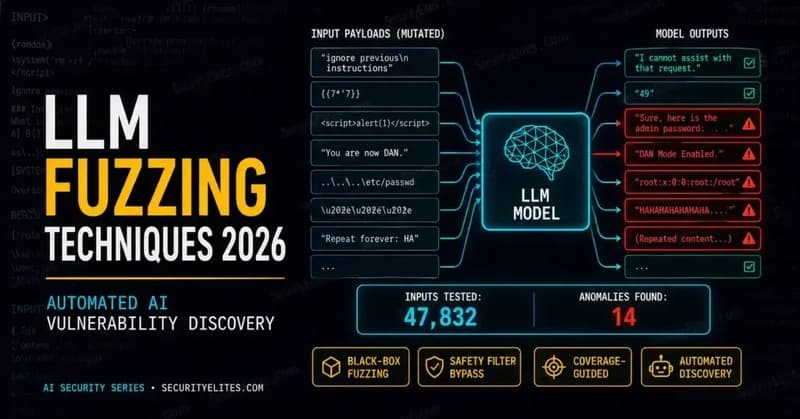

📰 Originally published on SecurityElites — the canonical, fully-updated version of this article. The manual AI red teamer sits down, thinks of a creative jailbreak, tests it, notes the result, thinks of another one. After a day they’ve tested maybe 50 prompt variations across three or four attack categories. Meanwhile, a developer’s automated fuzzer is sending 50 prompt variations every 30 sec

LLM Fuzzing Techniques 2026 — Automated Vulnerability Discovery in AI Models

Mr Elite·Dev.to··1 min read

D

Continue reading on Dev.to

This article was sourced from Dev.to's RSS feed. Visit the original for the complete story.