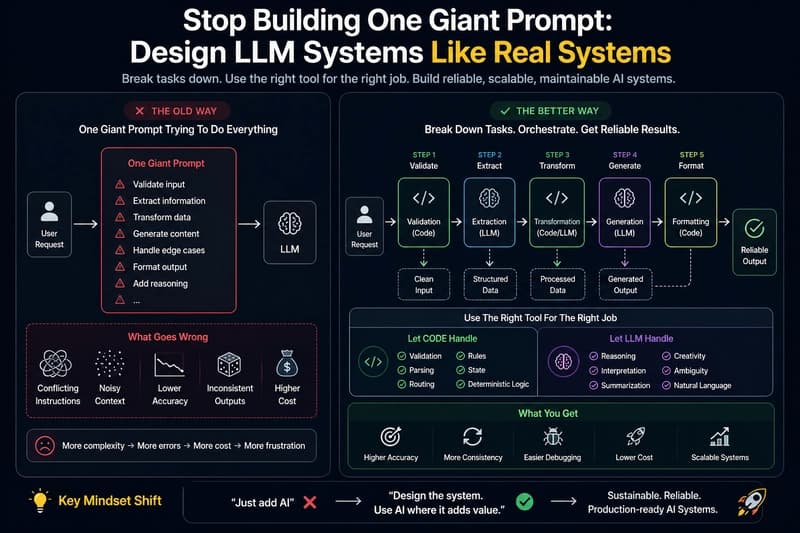

Most early LLM apps start the same way: “Let’s just put everything into one prompt and let the model handle it.”

So we write a prompt that tries to:

- validate input
- transform data
- generate output
- summarize
- add reasoning
- handle edge cases

…and somehow do it all in one call.

It works, until it doesn’t.

### The Problem with “God Prompts”

As the prompt grows:

- Instructions start conflicting
- Context be
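The excerpt is cut off before it describes the alternative, so the following is only a minimal sketch under one assumption: that the "better way" in the title means giving each task (validate, transform, generate, summarize) its own narrow prompt and chaining the calls. `Step`, `run_pipeline`, and `fake_llm` are hypothetical names for illustration, and `fake_llm` is a stub standing in for a real model client.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for a real model client: takes a prompt, returns text.
LLMCall = Callable[[str], str]


@dataclass
class Step:
    name: str
    instruction: str  # one narrow instruction per step


def run_pipeline(steps: list[Step], user_input: str, call_llm: LLMCall) -> dict[str, str]:
    """Run each step in order, feeding the previous step's output forward."""
    results: dict[str, str] = {}
    current = user_input
    for step in steps:
        # Each call sees a single instruction plus the previous step's output.
        prompt = f"{step.instruction}\n\nInput:\n{current}"
        current = call_llm(prompt)
        results[step.name] = current
    return results


def fake_llm(prompt: str) -> str:
    # Stub (assumption): echoes the instruction line so the sketch runs offline.
    instruction = prompt.splitlines()[0]
    return f"[{instruction}]"


# Illustrative steps mirroring the tasks named in the excerpt.
STEPS = [
    Step("validate", "Check that the input is well formed."),
    Step("transform", "Normalize the data."),
    Step("generate", "Draft the output."),
    Step("summarize", "Summarize the draft."),
]

if __name__ == "__main__":
    for name, output in run_pipeline(STEPS, "raw user text", fake_llm).items():
        print(name, "->", output)
```

Because each step has a single instruction, a failure can be traced to one named stage instead of somewhere inside one large prompt.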

## Stop Building One Giant Prompt: A Better Way to Design LLM Systems

Swapneswar Sundar Ray · Dev.to · 1 min read



This article was sourced from Dev.to's RSS feed. Visit the original for the complete story.